
The Swap

Manufacturing has long been fertile ground for automation. But as AI moves from the experimental fringes into production lines and engineering workflows, a more nuanced conversation is emerging — not just about what AI can do, but about what happens when it goes wrong, and how trust is built at the grassroots level.

As ManufactureAI has deployed its solution across B2B manufacturers spanning low-volume to high-volume production, two consistent themes have surfaced from the engineering floor: excitement at the top and measured skepticism below. That gap between leadership enthusiasm and operator hesitation is where the real adoption challenge lives.

"We need this right now."– Process Engineer, Veev by Lennar

The urgency is real. But so is the caution. After extensive deployment across customer environments, three primary risk categories have emerged:

Output Accuracy — can the model be trusted to get it right?

Operator Deskilling — what happens to human expertise over time?

Model Behavior at Scale — does performance hold when volume and variability increase?

In this blog, we focus on the first of these: output accuracy.

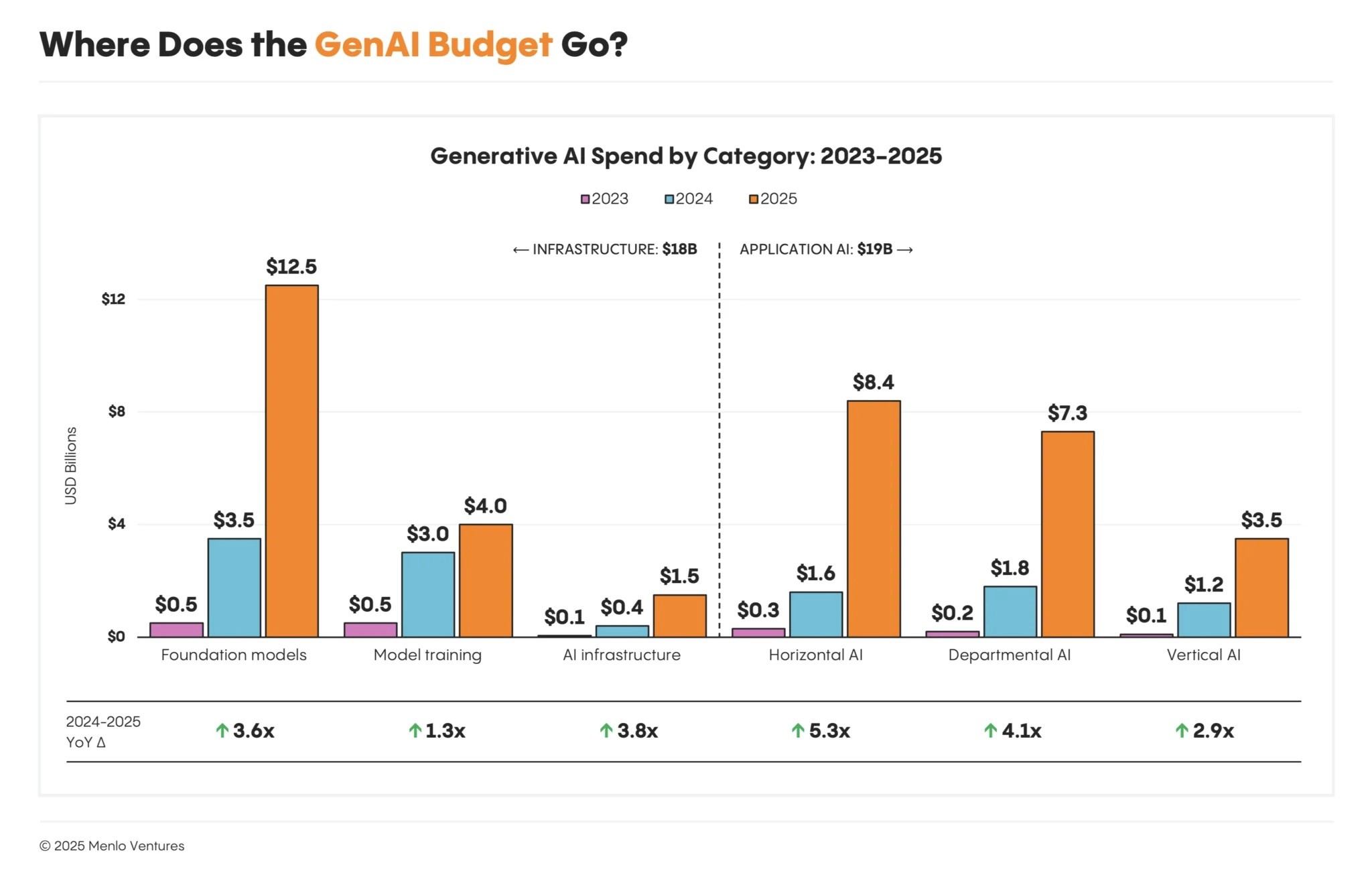

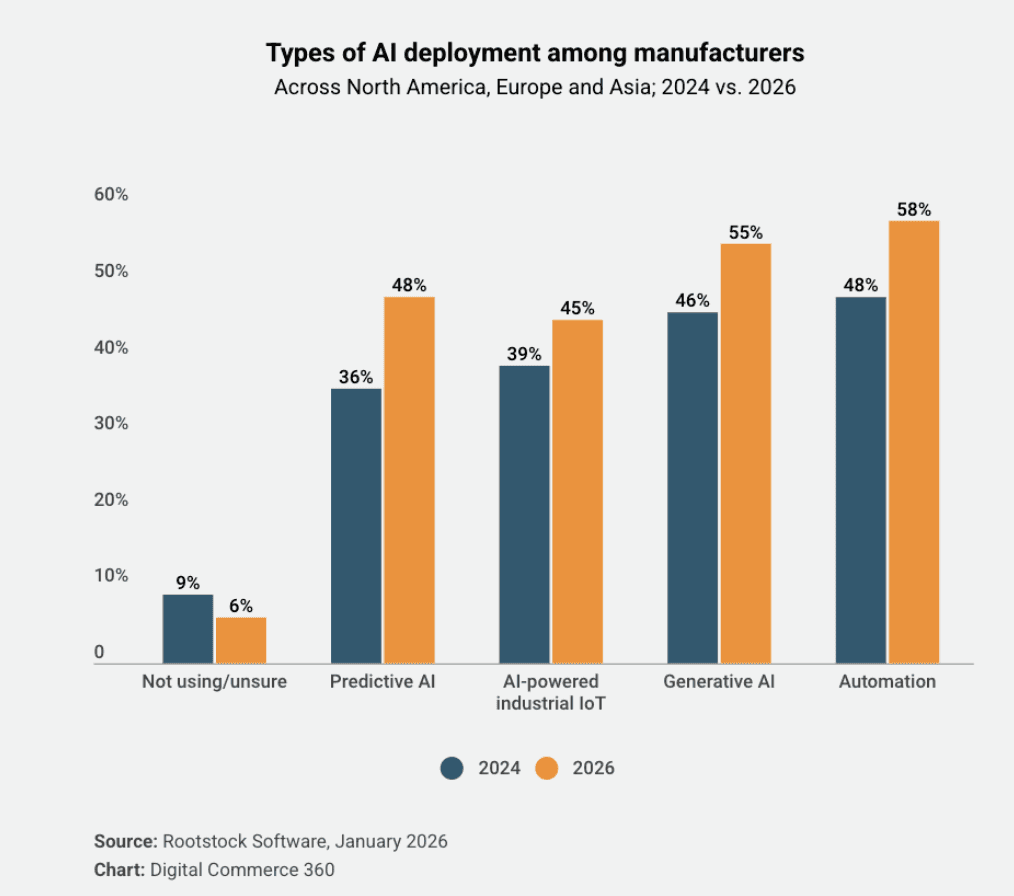

The Momentum Is Real – But So Are the Stakes

Despite the hesitations, one thing is clear: the industry is moving. The question is no longer whether AI will be adopted in manufacturing; it is whether companies can deploy it responsibly enough to capture the value on offer.

| 77% | $3.70 | $62B |
|---|---|---|
| of manufacturers now use AI solutions, up from 70% in 2024 (Source: Netguru, 2025) | returned for every $1 invested in GenAI by early enterprise adopters (Source: McKinsey / Fullview, 2025) | projected global AI-in-manufacturing market by 2032, growing at 35% CAGR (Source: Aristek Systems, 2025) |

Manufacturers that apply machine learning are 3x more likely to improve their key performance indicators, and companies deploying AI-powered process automation report an average 23% reduction in downtime. Productivity improvements of 20–30% are now consistently reported across early adopters.

But the headline adoption figures come with a critical asterisk. Even as 78% of organizations report using AI in at least one business function, up from 55% just two years ago, the failure rate tells a more sobering story:

| 70–85% | 42% |
|---|---|
| of AI initiatives fail to meet expected outcomes | of companies abandoned most AI initiatives in 2025, up from 17% in 2024 |

Source: Fullview, 2025

The gap between companies capturing real returns and those abandoning proofs of concept comes down to one variable: deployment discipline. Early movers achieving $3.70 back per dollar, and top performers reporting returns of $10 or more, share a common profile: success metrics defined from day one, human oversight baked in, and constrained rollout sequencing rather than broad deployment. The largest gains came from predictive AI, supply chain planning, and process optimization.

| The Takeaway: Manufacturing AI adoption is not a question of 'if'; 77% are already in. The question is whether deployments are architected carefully enough to stay. The companies winning are not deploying faster; they are deploying smarter. |
|---|

Is This Problem Worth Solving?

The manufacturing industry is still producing work instructions by hand — in Word documents, PDFs, and shared drives. For any hardware product launch, these documents are non-negotiable: without them, operators cannot assemble, inspect, or ship. Yet the process of creating them is manual, repetitive, and prone to inconsistency.

ManufactureAI's core offering is to free up engineering headspace by automating the production of manufacturing instructions: the monotonous-but-essential work that delays new product introductions (NPIs) and burns senior engineering hours. The pitch is simple: modern tooling with measurable productivity gains.

"What are some ways you measure and improve AI accuracy?"– Lead NPI Engineer, Veev by Lennar

That question, raised on the floor during a deployment, captures the core trust challenge. It is not a rejection of AI; it is a demand for accountability. And it deserves a serious answer.

How ManufactureAI Constrains Model Behavior

Rather than deploying generalist AI and hoping for the best, ManufactureAI's approach centers on architectural constraints that define not just what the model does, but how its outputs are measured. Accuracy is broken down into discrete, auditable dimensions:

Accuracy Evaluation Framework

| Accuracy Dimension | Weight | What It Catches |
|---|---|---|
| Correct order of operations | 20% | Sequence logic errors that cause downstream rework |
| Correct part recognition | 20% | Wrong part callouts that could cause assembly failures |
| Correct action words | 20% | Ambiguous verbs that leave operator interpretation open |
| Semantic meaning preserved | 20% | Paraphrased steps that lose critical procedural intent |
| Missing or redundant steps | 20% | Gaps or bloat that affect cycle time and quality |

This decomposition matters because it turns 'is the AI accurate?' from a yes/no gut check into an auditable, improvable metric. Each dimension can be measured independently, trended over time, and targeted for improvement.
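The decomposition maps directly onto a weighted score. The Python sketch below is a minimal illustration, assuming each dimension has already been scored between 0 and 1 (by a reviewer or an automated check); the dimension keys and function names are hypothetical, and only the equal 20% weights come from the framework table.

```python
# Weights taken from the Accuracy Evaluation Framework (20% per dimension).
# The key names are hypothetical labels for the five dimensions.
WEIGHTS = {
    "order_of_operations": 0.20,
    "part_recognition": 0.20,
    "action_words": 0.20,
    "semantic_meaning": 0.20,
    "missing_or_redundant_steps": 0.20,
}


def weighted_accuracy(scores: dict[str, float]) -> float:
    """Combine per-dimension scores (each in [0, 1]) into a single weighted score."""
    if set(scores) != set(WEIGHTS):
        raise ValueError(f"expected exactly these dimensions: {sorted(WEIGHTS)}")
    for name, value in scores.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} score out of range: {value}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def weakest_dimension(scores: dict[str, float]) -> str:
    """Return the lowest-scoring dimension, i.e. the next improvement target."""
    return min(WEIGHTS, key=lambda name: scores[name])


# Example: one work instruction scored on each dimension.
example = {
    "order_of_operations": 1.0,
    "part_recognition": 0.9,
    "action_words": 0.8,
    "semantic_meaning": 1.0,
    "missing_or_redundant_steps": 0.7,
}
print(round(weighted_accuracy(example), 2))  # 0.88
print(weakest_dimension(example))            # missing_or_redundant_steps
```

With equal weights the combined score is just the mean of the five dimensions; keeping the weights explicit makes it straightforward to re-weight later if, say, part-recognition errors turn out to be costlier than verb choice.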

Modern Risk Framework for AI in Manufacturing

The manufacturing industry has robust frameworks for managing product development risk — stage gates, FMEA, design reviews, pilot runs. AI deployment deserves the same rigor. Below is an expanded view of the risk framework ManufactureAI operates within, updated with the latest technical approaches in production AI systems.

1. Define and Deploy Accuracy Metrics from Day One

Modern production AI systems increasingly rely on structured evaluation frameworks — often called 'LLM evals' — that score model outputs against known reference answers. The key technical approaches include:

Reference-based evals: compare AI outputs to human-written gold standards using embedding similarity and exact-match scoring.

LLM-as-judge: use a secondary model (or the same model) to score outputs on defined rubrics — particularly effective for semantic accuracy assessment.

Regression testing: maintain a dataset of known inputs and expected outputs; run automatically on every model update to catch regressions.

Slice-based analysis: measure accuracy broken down by product type, complexity level, and manufacturing stage to surface hidden failure patterns.
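The reference-based, regression, and slice-based approaches can be combined in one small harness. The Python sketch below is illustrative only: it uses the standard library's `difflib.SequenceMatcher` as a lexical stand-in for embedding similarity (a production setup would swap in an embedding model), and the case format, `generate` callable, baseline values, and tolerance are all hypothetical.

```python
from collections import defaultdict
from difflib import SequenceMatcher
from statistics import mean


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences don't count."""
    return " ".join(text.lower().split())


def score_case(output: str, reference: str) -> dict[str, float]:
    """Reference-based scoring: exact match plus a lexical similarity ratio in [0, 1]."""
    out, ref = normalize(output), normalize(reference)
    return {
        "exact_match": 1.0 if out == ref else 0.0,
        # Stand-in for embedding similarity; swap in a real embedding model in practice.
        "similarity": SequenceMatcher(None, out, ref).ratio(),
    }


def evaluate(cases: list[dict], generate) -> list[dict]:
    """Run every regression case through the model and score it against its gold reference."""
    return [
        {**score_case(generate(case["input"]), case["reference"]), "slice": case["slice"]}
        for case in cases
    ]


def by_slice(results: list[dict], metric: str = "similarity") -> dict[str, float]:
    """Slice-based analysis: mean score per slice, e.g. product type or complexity level."""
    grouped = defaultdict(list)
    for result in results:
        grouped[result["slice"]].append(result[metric])
    return {name: mean(values) for name, values in grouped.items()}


def regression_gate(results, baseline, tolerance=0.02, metric="similarity") -> list[str]:
    """Return the slices that fell more than `tolerance` below their baseline score.

    A slice with no results in this run is treated as a score of 0 and therefore flagged.
    """
    current = by_slice(results, metric)
    return sorted(
        name for name, base in baseline.items() if current.get(name, 0.0) < base - tolerance
    )
```

Running `regression_gate` on every model update, against baselines recorded from the last accepted version, is one way to make the regression step automatic; the per-slice breakdown surfaces a drop that an overall average would hide.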

2. Hallucination Minimization

Hallucination — the model generating confident but fabricated content — is the most dangerous failure mode in manufacturing documentation. The latest technical strategies to minimize it include:

Retrieval-Augmented Generation (RAG): ground model outputs in retrieved source documents (BOMs, CAD specs, prior work instructions) rather than relying on parametric model memory alone.

Constrained decoding: restrict model output vocabulary or format using structured output schemas (e.g., JSON with required fields), preventing free-form generation.

Confidence scoring: use token-level log probabilities or ensemble models to flag low-confidence outputs for human review.

Source attribution: require the model to cite which input document each step was derived from, making hallucinations detectable during review.
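Constrained decoding itself happens inside the generation call, but the same schema, plus source attribution and a confidence check, can also be enforced on whatever the model returns. A minimal Python sketch, assuming the model emits a JSON list of steps; the field names (`step`, `action`, `part_number`, `source_doc`), the BOM and retrieved-document sets, and the -0.5 mean log-probability threshold are hypothetical.

```python
import json
from typing import Optional

# Hypothetical schema: every generated step must carry these fields.
REQUIRED_FIELDS = {"step", "action", "part_number", "source_doc"}


def validate_steps(
    raw_output: str,
    bom_parts: set[str],
    retrieved_docs: set[str],
    token_logprobs: Optional[list[float]] = None,
    min_mean_logprob: float = -0.5,
) -> list[str]:
    """Check a generated work instruction; an empty list means every check passed."""
    try:
        steps = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return [f"output is not valid JSON: {exc}"]
    if not isinstance(steps, list):
        return ["output must be a JSON list of steps"]

    issues = []
    for i, step in enumerate(steps):
        if not isinstance(step, dict):
            issues.append(f"entry {i}: not a JSON object")
            continue
        missing = REQUIRED_FIELDS - set(step)
        if missing:
            issues.append(f"entry {i}: missing fields {sorted(missing)}")
            continue
        # Grounding check: the referenced part must exist in the retrieved BOM.
        if step["part_number"] not in bom_parts:
            issues.append(f"entry {i}: part {step['part_number']} not in BOM")
        # Source attribution: the cited document must be one that was actually retrieved.
        if step["source_doc"] not in retrieved_docs:
            issues.append(f"entry {i}: cites unknown source {step['source_doc']}")

    # Confidence scoring: flag the whole draft if the mean token log-probability is low.
    if token_logprobs:
        mean_lp = sum(token_logprobs) / len(token_logprobs)
        if mean_lp < min_mean_logprob:
            issues.append(f"low confidence: mean token logprob {mean_lp:.2f}")
    return issues
```

In this sketch, any non-empty issue list would send the draft to human review instead of to the operator, so a fabricated part number or an unattributed step is caught before it reaches the floor.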

3. Human-in-the-Loop Model Evals

Fully automated evaluation catches systematic errors, but human reviewers catch the subtle domain-specific failures that automated metrics miss. The most effective HITL architectures include:

Pattern matching loops: identify common error patterns across human reviews and add them to the automated eval suite, progressively shrinking the human review burden.

Active learning: route edge-case outputs to human reviewers and use those annotations to fine-tune the model on failure cases.

Disagreement sampling: when model confidence is low or when two independent runs produce different outputs, escalate to human review automatically.

Calibrated trust thresholds: define which output categories can be auto-approved and which always require sign-off, based on downstream risk (safety-critical steps always require human review).
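Disagreement sampling and calibrated thresholds can be combined into a single routing rule. A minimal Python sketch, assuming each step is generated twice independently and tagged with a risk category and a confidence in [0, 1]; the `Draft` type, category names, and threshold values are hypothetical, and the only fixed rule taken from the text is that safety-critical steps always go to a human.

```python
from dataclasses import dataclass

# Hypothetical auto-approval thresholds per category. "safety_critical" is deliberately
# absent: it must never be auto-approved.
AUTO_APPROVE_THRESHOLDS = {"cosmetic": 0.80, "standard": 0.90}


@dataclass
class Draft:
    text: str          # generated instruction step
    category: str      # risk category assigned to the step
    confidence: float  # pipeline confidence in [0, 1]


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace before comparing two runs."""
    return " ".join(text.lower().split())


def route(first: Draft, second: Draft) -> str:
    """Return 'auto_approve' or 'human_review' for two independent runs of one step."""
    # Safety-critical steps always require sign-off.
    if "safety_critical" in (first.category, second.category):
        return "human_review"
    # Disagreement sampling: runs that disagree on category or content are escalated.
    if first.category != second.category or normalize(first.text) != normalize(second.text):
        return "human_review"
    threshold = AUTO_APPROVE_THRESHOLDS.get(first.category)
    # Unknown categories default to review rather than auto-approval.
    if threshold is None or min(first.confidence, second.confidence) < threshold:
        return "human_review"
    return "auto_approve"
```

Defaulting every unrecognized case to review keeps the rule conservative; the human decisions it produces could then feed the pattern-matching and active-learning loops as new eval cases.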